Meta Reveals VR Headset Prototypes Designed to Achieve Visual Realism

Image courtesy of: Meta

- FRΛNK R.

- On June 22, 2022

Earlier this week, Meta CEO Mark Zuckerberg showcased several new prototype head-mounted displays (HMDs) that the company is working on, which aim to advance VR display technology to the point of being indistinguishable from the real world.

During a recent virtual “Inside the Lab” round table presentation, Zuckerberg, Reality Labs Chief Scientist Michael Abrash, and other members of the Reality Labs team offered a glimpse of the advanced VR technologies Meta is developing for the future.

The four experimental research prototypes, as seen in the video below, are each designed as a proof of concept addressing challenges such as resolution, focal depth, optical distortion, and high dynamic range (HDR). The goal, according to Zuckerberg, is to create a headset so advanced that it could eventually pass a “Visual Turing test.” The name is a reference to Alan Turing’s imitation game, which tests whether a machine can exhibit intelligence equivalent to, or indistinguishable from, that of a human being. In this context, however, Meta wants its VR headset to display visuals so realistic that users believe they are viewing the real world.

“I think we’re in the middle right now of a big step forward towards realism. I don’t think it’s going to be that long until we can create scenes with basically perfect fidelity,” Zuckerberg said at the round table event.

As we build toward the metaverse, we’re researching how to develop a virtual reality display system where what you see in your headset is as vivid and detailed as the physical world. Mark Zuckerberg just shared the details here https://t.co/jinbBB4stF pic.twitter.com/toxEcYABT8

— Meta Newsroom (@MetaNewsroom) June 20, 2022

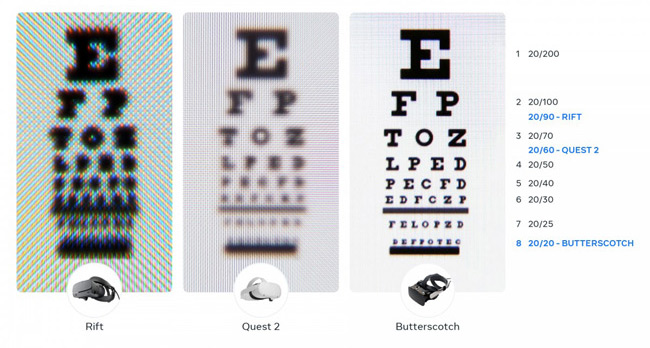

To tackle what Meta called “retinal resolution,” the company revealed a prototype headset codenamed ‘Butterscotch,’ which is said to offer about 2.5x as many pixels as the current Meta Quest 2 headset, allowing a user to read the 20/20 line on an eye chart. By comparison, the Quest 2 supports 20/60 vision. To reach about 55 pixels per degree (ppd), just short of Meta’s 60 ppd retinal standard, the team had to concentrate the pixels over a smaller area, limiting the field of view to about half that of a Quest 2. The company says it also had to develop a new hybrid lens that could fully resolve the higher resolution.
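The relationship between eye-chart acuity, pixels per degree, and field of view can be sketched with a little arithmetic. The snippet below is a rough illustration, not Meta's method: it assumes the common convention that 20/20 vision resolves about one arcminute (so 60 ppd), treats ppd as a simple average across the field of view, and uses hypothetical function names and panel numbers that are not real headset specifications.

```python
def ppd_for_snellen(denominator: float) -> float:
    """Approximate pixels per degree needed to match 20/<denominator> acuity.

    Assumption: 20/20 vision resolves roughly 1 arcminute, i.e. 60 ppd.
    """
    return 60.0 * 20.0 / denominator


def snellen_for_ppd(ppd: float) -> float:
    """Approximate Snellen denominator (the X in 20/X) for a given ppd."""
    return 20.0 * 60.0 / ppd


def average_ppd(horizontal_pixels: float, fov_degrees: float) -> float:
    """Average ppd if pixels were spread evenly across the field of view.

    Real lenses distribute pixels unevenly, so this is only a rough estimate.
    """
    return horizontal_pixels / fov_degrees


if __name__ == "__main__":
    print(ppd_for_snellen(20))   # 20/20 line
    print(ppd_for_snellen(60))   # 20/60 line
    print(snellen_for_ppd(55))   # acuity equivalent of 55 ppd

    # Hypothetical panel: the same pixel count over half the field of view.
    print(average_ppd(2000, 100))
    print(average_ppd(2000, 50))
```

Under these assumptions, 20/20 corresponds to 60 ppd, 20/60 to 20 ppd, and 55 ppd to roughly 20/22. The last two calls show why shrinking the field of view is the quickest way to raise ppd: the same hypothetical 2,000 pixels spread over half the angle yields twice the average density.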

Another key challenge is focal depth. Zuckerberg showed off the ‘Half Dome’ prototype, which uses eye tracking and varifocal optics to handle variable focal depths and let users focus on objects at any distance. The Half Dome series of prototypes, which Meta began building in 2017, initially used mechanical varifocal displays that dynamically changed the distance between the display and the lens, based on where the user was looking, to deliver a proper depth of focus. In later iterations, the team moved to an electronic varifocal system by replacing all moving parts with a thin stack of liquid crystal lenses, resulting in a significantly more compact, silent, and reliable varifocal experience.

Zuckerberg notes that, unlike with traditional monitors that are a set distance away, in VR and AR, you need to be able to focus on things that are very far away but also clearly focus on objects that are very close to your face.

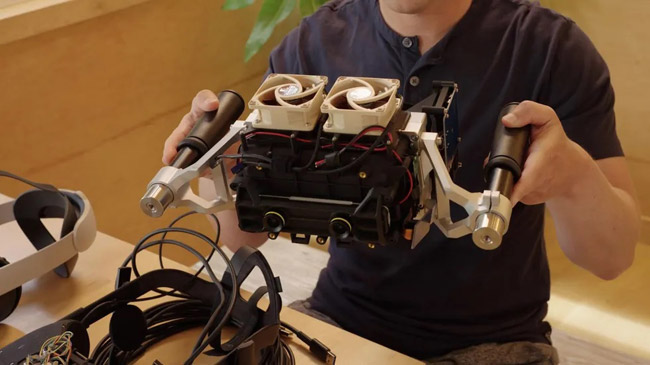

Then there’s the ‘Starburst’ prototype, which may very well be the first known VR headset to support high dynamic range (HDR), according to Zuckerberg. Meta’s latest VR headset, the Quest 2, can produce about 100 nits of brightness. Starburst, on the other hand, is said to reach a peak of 20,000 nits, making it one of the brightest HDR displays ever built. That would let users experience colors and brightness levels as natural and realistic as those in real life. In its current form, Starburst is a tethered device that is far too bulky and heavy to wear, requiring the user to hold it by a pair of handles to support its weight. “[Starburst] is wildly impractical to consider as a product direction for the first generation, but we’re using it as a testbed for further research and studies,” says Zuckerberg. “The goal of all this work is to help us identify which technical paths are going to allow us to make meaningful enough improvements that we can start approaching visual realism.”
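To put the two brightness figures in perspective, the short sketch below compares the quoted peak values. Expressing the gap in photographic "stops" (doublings of luminance) is a convention added here for illustration, not something from the presentation, and the function names are hypothetical. Note that it compares peak brightness only, which is not the same as a display's full dynamic range.

```python
import math


def brightness_ratio(peak_nits: float, reference_nits: float) -> float:
    """How many times brighter one peak luminance is than another."""
    return peak_nits / reference_nits


def stops_between(peak_nits: float, reference_nits: float) -> float:
    """Number of luminance doublings (stops) separating the two values."""
    return math.log2(peak_nits / reference_nits)


if __name__ == "__main__":
    quest_2_nits = 100.0       # approximate figure quoted for Quest 2
    starburst_nits = 20_000.0  # peak figure quoted for Starburst

    print(brightness_ratio(starburst_nits, quest_2_nits))
    print(round(stops_between(starburst_nits, quest_2_nits), 2))
```

On these figures, Starburst's peak is 200 times that of the Quest 2, or a little over seven and a half doublings of brightness.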

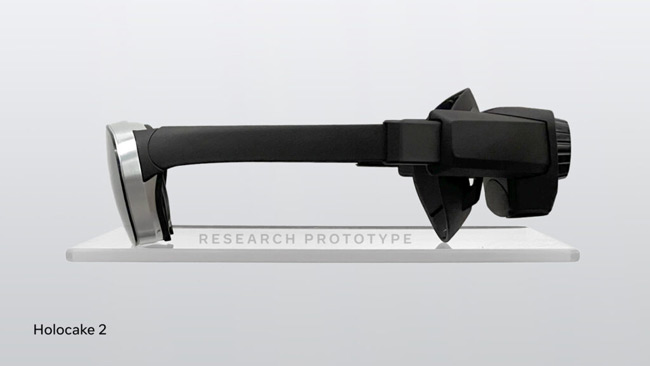

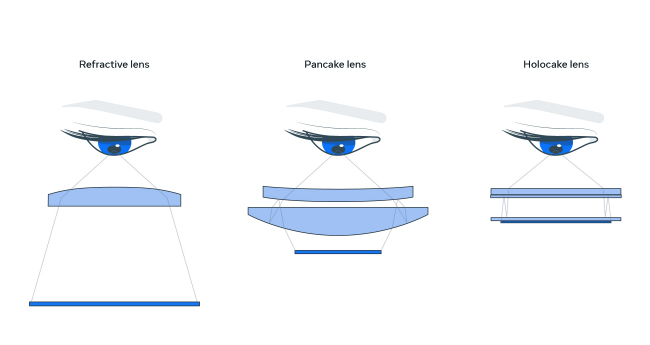

Finally, there’s ‘Holocake 2,’ a working experimental VR headset that uses holographic display optics and can already run PC VR experiences. The goal of this prototype is to pack Meta’s most advanced technology into the thinnest and lightest VR headset possible.

Holocake 2 combines holographic optics with polarization-based optical folding, which emulates a “pancake” lens in a much thinner form factor and dramatically shortens the path of light from the display to the eye. This allows Meta to substantially reduce the size and weight of the headset and develop a super-compact device.

While the experimental headsets that Zuckerberg briefly showed off during the presentation exist as actual hardware, Meta also revealed one other design, referred to as ‘Mirror Lake,’ which is an ambitious concept that still needs to be proven. In time, the goal is to incorporate all these advanced visual technologies into a single device.

“It [Mirror Lake] shows what a complete next-gen display system could look like,” according to Abrash, who later added: “But right now it’s only a concept with no fully functional headset yet built to conclusively prove out this architecture. But if it does pan out though, it will be a game changer for the VR visual experience.”

For a more in-depth overview, watch Zuckerberg and Reality Labs Chief Scientist Michael Abrash discuss the challenges and possibilities of each experimental prototype in the video below:
